📘 Multiple Linear Regression

In real-world problems, outcomes rarely depend on a single factor. Instead, many variables influence the result. Multiple regression allows us to analyze the combined effect of several predictors.

🎯 Why Multiple Regression is Important

Most real-world predictions involve multiple variables.

Examples:

- House price depends on size, location, age, and number of rooms.

- Student performance depends on study hours, attendance, and prior knowledge.

- Sales depend on advertising, product price, and season.

- Machine learning model accuracy depends on training data size, feature quality, and algorithm complexity.

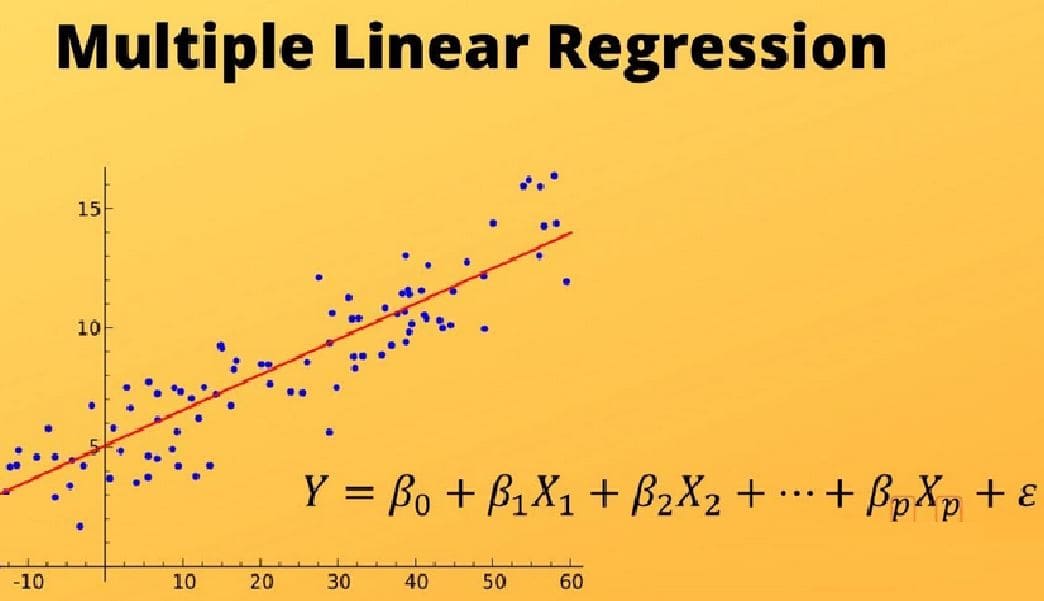

📐 Multiple Regression Model

The general form of the multiple linear regression model is:

\[ y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + \epsilon \]

Where:

- y = dependent variable

- x₁, x₂, ..., xₖ = independent variables

- β₀ = intercept

- β₁, β₂, ..., βₖ = regression coefficients (one per independent variable)

- ε = error term
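As a minimal sketch of how the coefficients in this model can be estimated, the snippet below fits β₀ through β₃ by ordinary least squares with NumPy. The data is synthetic, and the names `true_beta`, `X_design`, and `beta_hat` are assumptions chosen for illustration, not part of the original material.

```python
import numpy as np

# Synthetic data (assumed for illustration): 3 predictors with known coefficients.
rng = np.random.default_rng(0)
n = 200
X = rng.normal(size=(n, 3))
true_beta = np.array([2.0, 1.5, -0.5, 3.0])  # [beta_0, beta_1, beta_2, beta_3]
noise = rng.normal(scale=0.1, size=n)        # plays the role of the error term
y = true_beta[0] + X @ true_beta[1:] + noise

# Prepend a column of ones so the intercept beta_0 is estimated with the slopes.
X_design = np.column_stack([np.ones(n), X])

# Ordinary least squares: minimize the sum of squared residuals.
beta_hat, *_ = np.linalg.lstsq(X_design, y, rcond=None)

print("estimated coefficients:", np.round(beta_hat, 3))
```

Because the noise is small relative to the signal, the estimates should land close to `true_beta`. With real data the true coefficients are unknown, so the fit has to be judged with the performance measures covered later.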

📊 Example — Predicting House Price

Suppose we want to predict house price using three factors:

- House size (square meters)

- Number of bedrooms

- Distance from city center

The regression model may look like:

\[ \text{Price} = 50{,}000 + 300 \cdot \text{Size} + 10{,}000 \cdot \text{Bedrooms} - 2{,}000 \cdot \text{Distance} \]

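Interpretation (each effect assumes the other two predictors are held constant):

- Each additional square meter increases the predicted price by $300.

- Each additional bedroom increases the predicted price by $10,000.

- Each additional kilometer from the city center reduces the predicted price by $2,000.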
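The example equation can be checked with a few lines of Python. The function name `predict_price` and the sample house (100 m², 3 bedrooms, 5 km from the center) are assumptions made for illustration. The coefficients are the ones from the example model.

```python
def predict_price(size_m2, bedrooms, distance_km):
    """Evaluate the example model: intercept plus one term per predictor."""
    return 50_000 + 300 * size_m2 + 10_000 * bedrooms - 2_000 * distance_km

# Hypothetical house: 100 square meters, 3 bedrooms, 5 km from the city center.
base = predict_price(100, 3, 5)
print(base)  # 50,000 + 30,000 + 30,000 - 10,000 = 100000

# Change one predictor at a time; the price moves by exactly that coefficient.
print(predict_price(101, 3, 5) - base)  # 300
print(predict_price(100, 4, 5) - base)  # 10000
print(predict_price(100, 3, 6) - base)  # -2000
```

Changing a single input while keeping the others fixed is what "holding the other predictors constant" means in practice.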

📈 Visualizing Multiple Regression

Unlike simple regression (which produces a straight line), multiple regression produces a plane or hyperplane.

- 2 predictors → regression plane

- 3+ predictors → regression hyperplane (a flat surface in four or more dimensions, which cannot be plotted directly)

📊 Measuring Model Performance

Multiple regression models are evaluated using:

- R² (coefficient of determination)

- Adjusted R²

- Residual analysis

- Prediction error

Adjusted R²

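Adjusted R² corrects R² for the number of predictors in the model.

Plain R² never decreases when a predictor is added, even a useless one. Adjusted R² penalizes each extra predictor, so it rises only when the new variable improves the fit by more than chance alone would be expected to.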
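The standard formula, with n observations and k predictors, is:

\[ R^2_{adj} = 1 - (1 - R^2) \frac{n - 1}{n - k - 1} \]

A short sketch of the calculation follows. The function name `adjusted_r2` and the numbers (R² = 0.80, n = 50) are assumptions picked only to show the penalty at work.

```python
def adjusted_r2(r2, n, k):
    """Adjusted R^2 for a model with k predictors fitted on n observations."""
    if n - k - 1 <= 0:
        raise ValueError("need more observations than predictors plus one")
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)

# Same raw R^2 and sample size, different numbers of predictors.
few = adjusted_r2(0.80, n=50, k=3)
many = adjusted_r2(0.80, n=50, k=10)

print(round(few, 4))   # 0.787
print(round(many, 4))  # 0.7487
```

Both models explain the same share of variance, but the one that needs ten predictors to do so scores lower.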

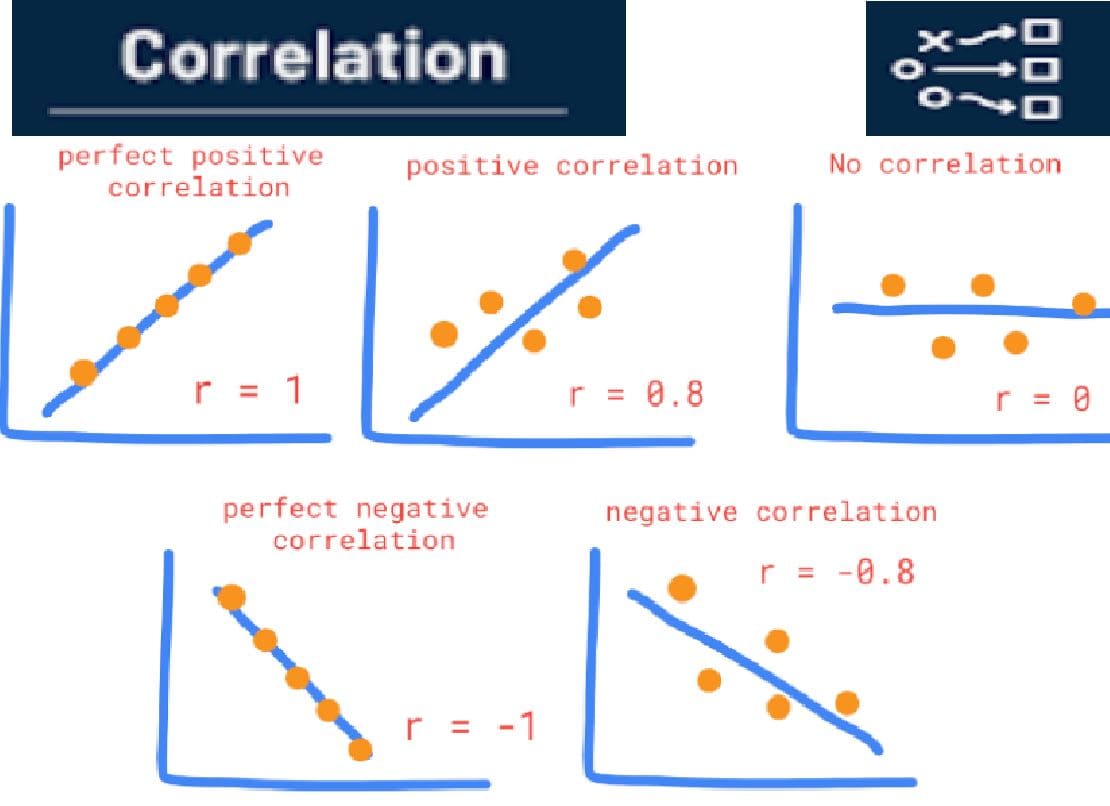

⚠️ Multicollinearity

Multicollinearity occurs when independent variables are strongly correlated with each other.

Example:

- House size and number of rooms may be highly correlated.

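When predictors overlap in this way, the model cannot cleanly separate their individual effects. Coefficient estimates become unstable (small changes in the data can shift them substantially) and difficult to interpret.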
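One common way to detect this problem, not covered in the text above, is to inspect pairwise correlations and the variance inflation factor (VIF), defined as 1 / (1 − R²) when one predictor is regressed on all the others. The sketch below uses synthetic housing data. The variable names, the way `rooms` is generated from `size`, and the helper `vif` are all assumptions made for illustration.

```python
import numpy as np

# Synthetic predictors (assumed): rooms is built to track size closely,
# while distance is generated independently of both.
rng = np.random.default_rng(1)
n = 500
size = rng.normal(120, 30, size=n)
rooms = size / 25 + rng.normal(0, 0.3, size=n)
distance = rng.normal(10, 4, size=n)
X = np.column_stack([size, rooms, distance])

# Pairwise correlations between the predictor columns.
corr = np.corrcoef(X, rowvar=False)

def vif(X, j):
    """VIF of column j: 1 / (1 - R^2) from regressing column j on the others."""
    target = X[:, j]
    others = np.delete(X, j, axis=1)
    design = np.column_stack([np.ones(len(target)), others])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    r2 = 1 - resid.var() / target.var()
    return 1 / (1 - r2)

vifs = [vif(X, j) for j in range(X.shape[1])]

print("corr(size, rooms):", round(corr[0, 1], 2))
print("VIFs [size, rooms, distance]:", np.round(vifs, 1))
```

A VIF near 1 means a predictor shares little information with the others. Values above roughly 5 to 10 are a widely used rule of thumb for problematic multicollinearity, though the exact cutoff is a convention rather than a strict rule.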

🤖 Multiple Regression in Machine Learning

Multiple linear regression is widely used in predictive analytics and machine learning. Common applications include:

- Economic forecasting

- Housing price prediction

- Marketing analytics

- Risk prediction

- Demand forecasting

🧠 Key Insights

- Multiple regression models relationships involving several variables.

- Each coefficient measures the effect of one predictor while holding others constant.

- Adjusted R² helps evaluate models with multiple predictors.

- Multicollinearity can affect interpretation of coefficients.

- Multiple regression is a foundation for many modern predictive modeling techniques.