📘 Bayesian Statistics: Introduction & Applications

Unlike classical statistics, which treats parameters as fixed but unknown quantities, Bayesian statistics treats parameters as random variables whose probability distributions are updated as data are observed.

🎯 Core Idea of Bayesian Thinking

Bayesian statistics is based on the idea that our beliefs about a parameter should be updated whenever new evidence becomes available.

It involves three elements:

- Prior knowledge (what we believe before observing data)

- Observed data (new evidence)

- Posterior belief (the updated belief that results from combining the prior with the data)

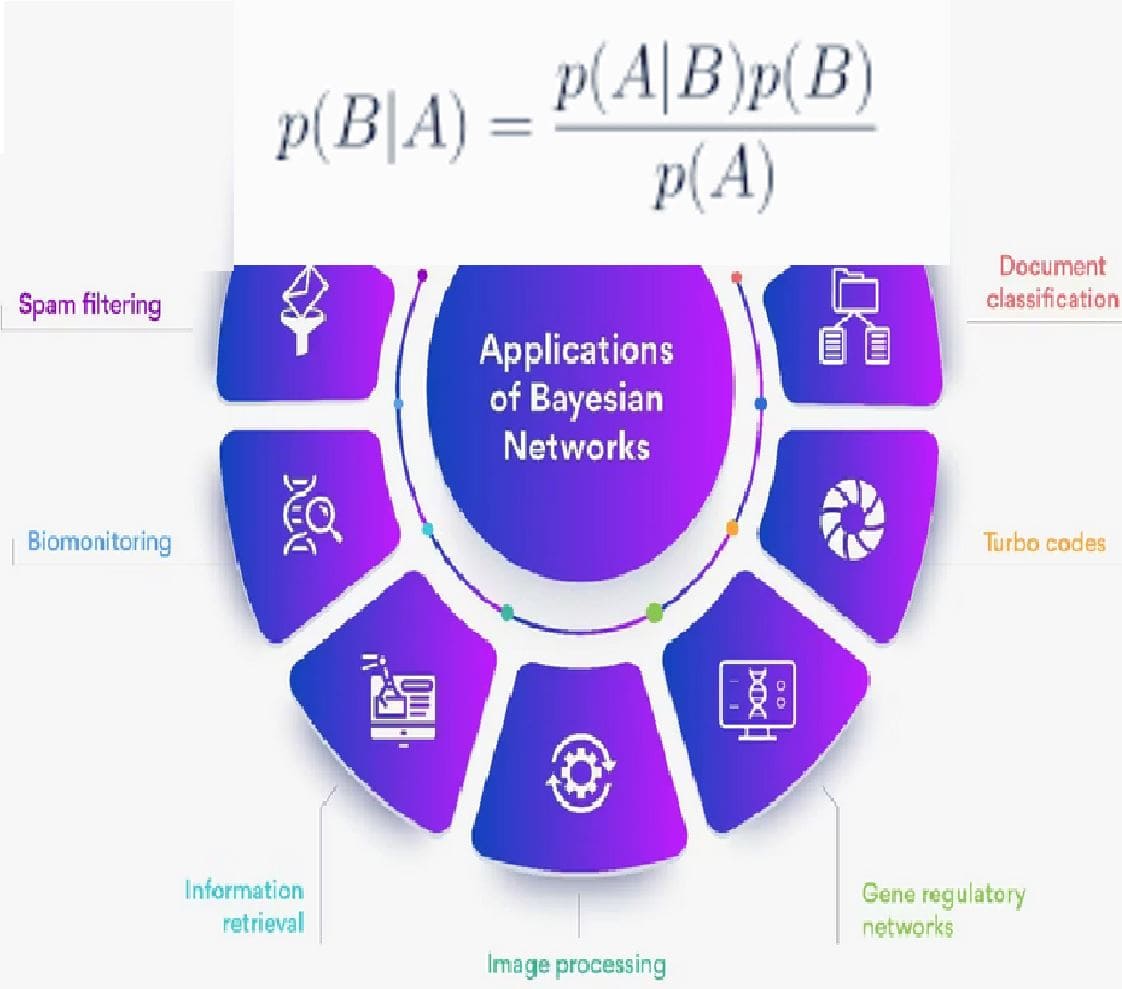

📐 Bayes' Theorem

Bayesian statistics is built on Bayes' theorem, which describes how the probability of a hypothesis A should be updated after observing evidence B.

\[ P(A|B) = \frac{P(B|A) \times P(A)}{P(B)} \]

Where:

- P(A|B) → Posterior Probability

- P(B|A) → Likelihood

- P(A) → Prior Probability

- P(B) → Evidence (the total probability of observing B)
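In practice the evidence P(B) is rarely known directly. When A is a yes/no hypothesis, it can be expanded with the law of total probability, where ¬A means "A is false":

\[ P(B) = P(B|A) \times P(A) + P(B|\neg A) \times P(\neg A) \]

Substituting this into Bayes' theorem gives a form that uses only the prior and the two likelihoods:

\[ P(A|B) = \frac{P(B|A) \times P(A)}{P(B|A) \times P(A) + P(B|\neg A) \times P(\neg A)} \]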

🧠 Key Components of Bayesian Inference

1️⃣ Prior Probability

The prior represents our belief about a parameter or hypothesis before observing new data.

Example:

- Suppose that, historically, 1% of people have a certain disease.

- This becomes our prior probability.

2️⃣ Likelihood

Likelihood represents how likely the observed data is given a particular hypothesis.

Example:

- If a test correctly detects the disease 95% of the time, then P(Positive | Disease) = 0.95 is the likelihood of a positive result under the hypothesis that the person has the disease.

3️⃣ Posterior Probability

The posterior is the updated probability of the hypothesis after the evidence has been taken into account.

🔍 Example — Medical Diagnosis

Suppose:

- 1% of people have the disease → Prior = 0.01

- The test detects the disease correctly 99% of the time → P(Positive | Disease) = 0.99

- False positive rate = 5% → P(Positive | No disease) = 0.05

A patient tests positive. What is the probability they actually have the disease?

Using Bayes' theorem:

\[ P(\text{Disease} | \text{Positive}) = \frac{P(\text{Positive} | \text{Disease}) \times P(\text{Disease})}{P(\text{Positive})} \]

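Expanding the evidence over both possibilities (disease or no disease) gives P(Positive) = 0.99 × 0.01 + 0.05 × 0.99 = 0.0594. So P(Disease | Positive) = 0.0099 / 0.0594 ≈ 0.167. The probability is only about 17%, not 99%. Healthy people vastly outnumber sick ones, so false positives (0.0495) outnumber true positives (0.0099) roughly five to one.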
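The same calculation can be checked in a few lines of Python. This is a minimal sketch for a binary hypothesis (disease vs. no disease). The function name `posterior` and its parameter names are illustrative and are not taken from any library.

```python
def posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Return P(H | E) via Bayes' theorem for a yes/no hypothesis H."""
    # Evidence P(E) via the law of total probability
    evidence = p_evidence_given_h * prior + p_evidence_given_not_h * (1 - prior)
    # Bayes' theorem: likelihood * prior / evidence
    return p_evidence_given_h * prior / evidence


# Medical example: 1% prevalence, 99% detection rate, 5% false positive rate
p = posterior(prior=0.01, p_evidence_given_h=0.99, p_evidence_given_not_h=0.05)
print(f"P(Disease | Positive) = {p:.4f}")  # prints 0.1667
```

Because the function takes the prior as an argument, you can call it with other prevalence values to see how strongly the base rate drives the result.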

📊 Bayesian vs Classical Statistics

| Aspect | Classical Statistics | Bayesian Statistics |
|---|---|---|
| Parameters | Fixed but unknown | Random variables |
| Use of prior knowledge | Not formally incorporated | Explicitly included via the prior |
| Inference method | Hypothesis testing, confidence intervals | Updating a posterior distribution |
| Interpretation of probability | Long-run frequency | Degree of belief |

📈 Bayesian Updating Process

Bayesian learning follows a cycle:

- Start with a prior belief

- Collect data

- Update beliefs using Bayes' theorem

- The posterior becomes the new prior

- Repeat as more data arrives
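Here is a minimal Python sketch of this cycle. It reuses the medical-diagnosis numbers from earlier: a 1% prior, a 99% detection rate, and a 5% false positive rate. For illustration, it assumes the patient takes the same test three times, each result is positive, and results are independent given whether the patient has the disease. The function name `update` is hypothetical.

```python
def update(prior, p_pos_given_disease=0.99, p_pos_given_healthy=0.05):
    """One Bayesian update after observing a positive test result."""
    # Evidence P(Positive) via the law of total probability
    evidence = p_pos_given_disease * prior + p_pos_given_healthy * (1 - prior)
    # Bayes' theorem: posterior = likelihood * prior / evidence
    return p_pos_given_disease * prior / evidence


belief = 0.01  # initial prior: 1% prevalence
history = []
for test_number in range(1, 4):
    # Assumed: each test is positive and independent given disease status
    belief = update(belief)  # the posterior becomes the new prior
    history.append(belief)
    print(f"After positive test {test_number}: P(Disease) = {belief:.4f}")
```

Running it prints 0.1667, 0.7984 and 0.9874. A single positive result leaves the diagnosis uncertain, but repeated positive results quickly push the belief toward certainty.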

🤖 Applications in Machine Learning

Bayesian methods underpin many ML algorithms and applications:

- Naive Bayes Classifier

- Spam filtering

- Recommendation systems

- Medical diagnosis AI

- Bayesian neural networks

- Probabilistic graphical models

🔎 Example — Spam Email Detection

Suppose:

- 40% of emails are spam

- The word "free" appears in 70% of spam emails

- The word "free" appears in 10% of normal emails

If an email contains the word "free", Bayes' theorem gives P(Spam | "free") = (0.70 × 0.40) / (0.70 × 0.40 + 0.10 × 0.60) = 0.28 / 0.34 ≈ 0.82. The email is therefore about 82% likely to be spam.

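A Naive Bayes spam filter applies the same calculation to many words at once. It makes the simplifying ("naive") assumption that words occur independently of one another, given whether the email is spam.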
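Here is a sketch of the "free" calculation in Python, generalized to several words in the Naive Bayes style. The function name `naive_bayes_spam_probability` and its input format are hypothetical. It considers only the words present in the email, which simplifies real filters (some also weigh absent words). It works in log space so that multiplying many small probabilities does not underflow.

```python
import math


def naive_bayes_spam_probability(prior_spam, word_likelihoods):
    """Posterior P(spam | words) under the naive independence assumption.

    word_likelihoods: list of (P(word | spam), P(word | ham)) pairs,
    one pair per word observed in the email.
    """
    # Start from the log prior of each class
    log_spam = math.log(prior_spam)
    log_ham = math.log(1 - prior_spam)
    # Independence assumption: add each word's log likelihood per class
    for p_w_spam, p_w_ham in word_likelihoods:
        log_spam += math.log(p_w_spam)
        log_ham += math.log(p_w_ham)
    # Normalize: P(spam | words) = S / (S + H) = 1 / (1 + H / S)
    return 1.0 / (1.0 + math.exp(log_ham - log_spam))


# The example above: 40% spam prior, one observed word "free"
p_spam = naive_bayes_spam_probability(0.40, [(0.70, 0.10)])
print(f"P(Spam | 'free') = {p_spam:.4f}")  # prints 0.8235
```

With a single word this reduces exactly to Bayes' theorem. Each additional word contributes one more (P(word | spam), P(word | ham)) pair.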

🧠 Key Insights

- Bayesian statistics updates probabilities using new data

- Prior beliefs combine with observed evidence

- Posterior probabilities represent updated knowledge

- Bayesian reasoning is widely used in AI and machine learning

- It provides a flexible framework for handling uncertainty