📘 Linear Regression — Foundations of Predictive Modeling

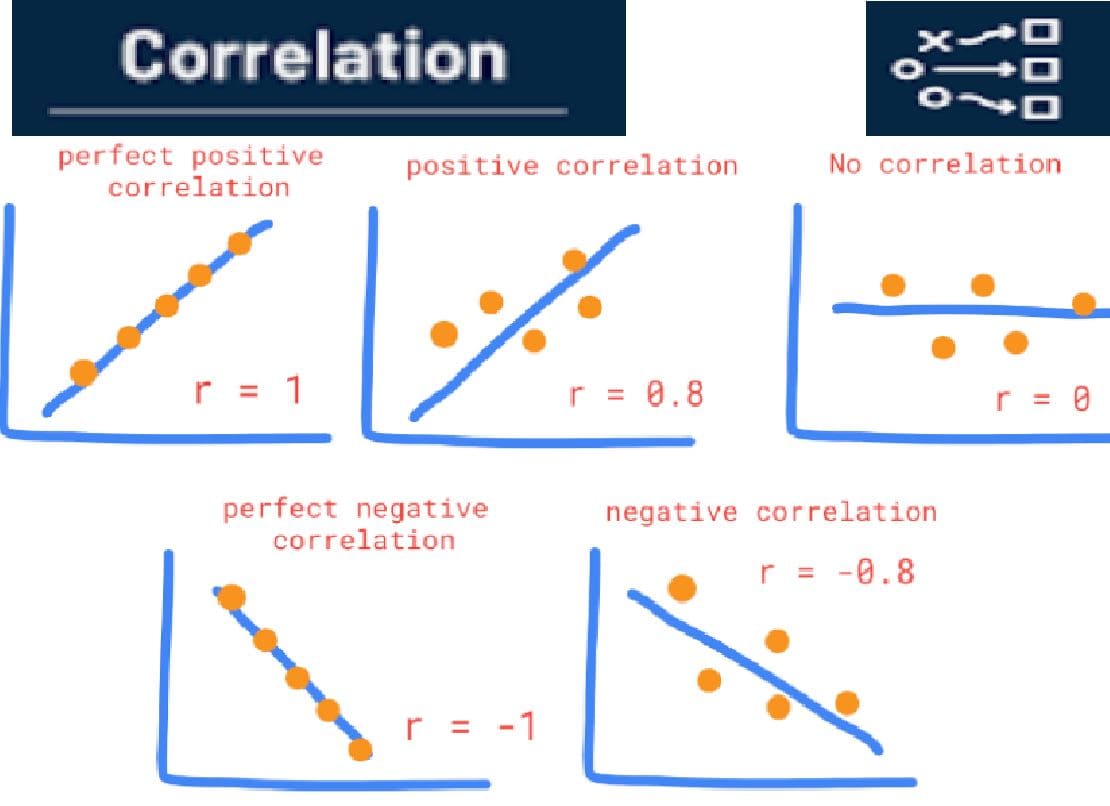

Linear regression helps us understand how one variable changes when another variable changes, and it allows us to make predictions based on that relationship.

🎯 Purpose of Linear Regression

Linear regression is used to answer questions such as:

- How does study time affect exam scores?

- How does advertising spending influence sales?

- How does house size affect house price?

- How does training data size affect ML model accuracy?

📊 Variables in Regression

Regression analysis involves two types of variables:

1️⃣ Independent Variable (Predictor)

The variable used to predict or explain another variable.

Example: Study hours

2️⃣ Dependent Variable (Response)

The variable we want to predict or explain.

Example: Exam score

📈 Simple Linear Regression Model

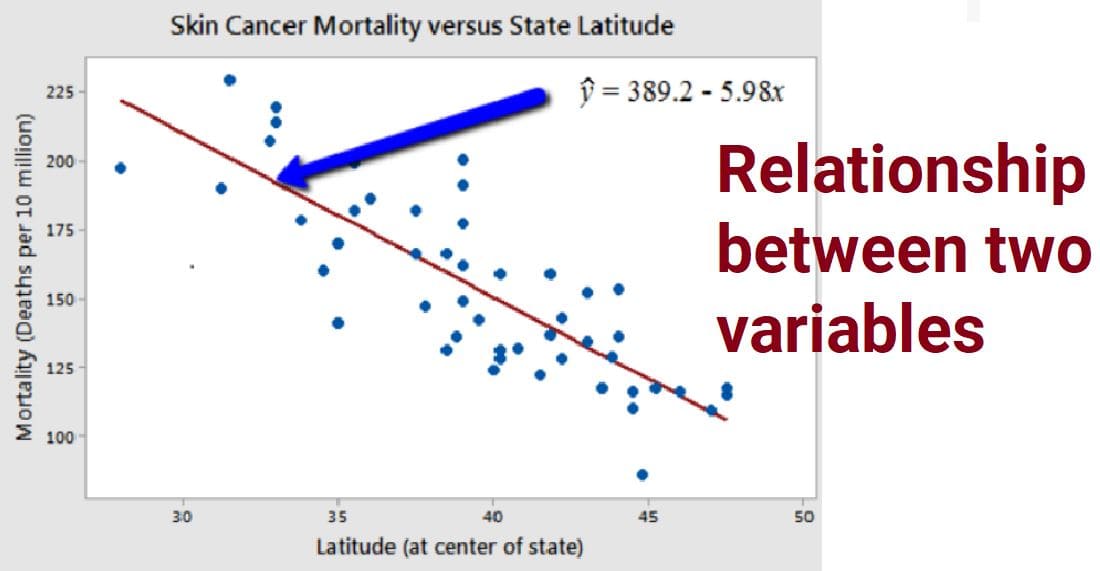

The relationship between two variables can be represented by the linear equation:

\[ y = \beta_0 + \beta_1 x + \epsilon \]

Where:

- y = Dependent variable

- x = Independent variable

- β₀ = Intercept

- β₁ = Slope (rate of change)

- ε = Random error

📐 Meaning of Regression Components

Intercept (β₀)

The predicted value of y when x = 0.

Slope (β₁)

The expected change in y when x increases by one unit.
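To make the two components concrete, here is a minimal sketch in Python. The function name `predict` and the coefficient values 3.0 and 2.0 are made up for illustration and do not come from any dataset in this document.

```python
def predict(x, beta0, beta1):
    """Return the point on the line y = beta0 + beta1 * x (error term omitted)."""
    return beta0 + beta1 * x


# Hypothetical coefficients, chosen only for illustration.
beta0, beta1 = 3.0, 2.0

# Intercept: the predicted value at x = 0.
at_zero = predict(0, beta0, beta1)

# Slope: the change in the prediction when x increases by one unit.
step = predict(5, beta0, beta1) - predict(4, beta0, beta1)

print("prediction at x = 0:", at_zero)
print("change per unit of x:", step)
```

The first printed value equals the intercept and the second equals the slope, whichever pair of neighboring x values is used for the difference.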

🔍 Example — Study Hours vs Exam Score

Suppose we collect the following data:

| Study Hours | Exam Score |
|---|---|
| 2 | 50 |
| 4 | 60 |
| 6 | 70 |
| 8 | 80 |
| 10 | 90 |

Every 2 additional study hours add 10 points to the score, so the data points lie exactly on a straight line with slope 5. The regression line is therefore:

\[ \text{Score} = 40 + 5 \times \text{StudyHours} \]

This means:

- Every additional hour of study is associated with a 5-point increase in the predicted score.

- The intercept of 40 is the predicted score for zero hours of study.
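The line can be checked against the table with a few lines of Python. This is only a sketch: the helper name `predicted_score` and the query value of 7 hours are assumptions added for illustration.

```python
# Data from the study-hours table.
hours = [2, 4, 6, 8, 10]
scores = [50, 60, 70, 80, 90]


def predicted_score(study_hours):
    """Apply the line Score = 40 + 5 * StudyHours."""
    return 40 + 5 * study_hours


# Residual = observed score minus predicted score, one per table row.
residuals = [s - predicted_score(h) for h, s in zip(hours, scores)]

# Prediction for a value that is not in the table (hypothetical query).
new_prediction = predicted_score(7)

print("residuals:", residuals)
print("predicted score for 7 hours:", new_prediction)
```

Every residual is zero because the table was constructed to be perfectly linear. Real data almost never is, which is why a fitting procedure is needed.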

📊 Regression Line

The regression line is the best-fitting straight line through the data points in a scatter plot.

Among all possible straight lines, it is the one with the smallest sum of squared differences between the observed values and the predicted values.

📐 Least Squares Method

The regression line is calculated by minimizing the sum of squared errors.

\[ SSE = \sum (y_i - \hat{y}_i)^2 \]

Where:

- yᵢ = actual value

- ŷᵢ = predicted value

Squaring ensures that positive and negative errors do not cancel each other, and it penalizes large errors more heavily than small ones.
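For a single predictor, the minimizing slope and intercept have a well-known closed form: the slope is the sum of products of deviations from the means divided by the sum of squared deviations of x, and the intercept follows from the means. The sketch below applies it to the study-hours data. The function names `fit_line` and `sse` are illustrative, and the comparison line 45 + 4x is an arbitrary alternative chosen to show a larger error.

```python
def fit_line(xs, ys):
    """Closed-form least squares fit for one predictor; returns (intercept, slope)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept, slope


def sse(xs, ys, intercept, slope):
    """Sum of squared errors between observed and predicted values."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


# Data from the study-hours table.
hours = [2, 4, 6, 8, 10]
scores = [50, 60, 70, 80, 90]

intercept, slope = fit_line(hours, scores)
sse_best = sse(hours, scores, intercept, slope)

# An arbitrary alternative line, for comparison only.
sse_other = sse(hours, scores, 45, 4)

print("intercept:", intercept, "slope:", slope)
print("SSE of fitted line:", sse_best)
print("SSE of alternative line:", sse_other)
```

The fitted line reproduces the intercept of 40 and slope of 5 from the example, and any other line, such as the alternative here, yields a larger SSE.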

📊 Measuring Model Fit — R²

The coefficient of determination (R²) measures how well the regression model explains the variation in the data.

\[ R^2 = \frac{\text{Explained Variation}}{\text{Total Variation}} \]

For a least-squares line with an intercept, evaluated on the data it was fitted to, R² ranges from 0 to 1.

| R² Value | Interpretation |
|---|---|
| 0 | No explanatory power |
| 0.5 | Moderate explanatory power |
| 1 | Perfect prediction |
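The ratio can be computed directly once a line has been fitted. The sketch below uses a small made-up dataset (`xs`, `ys`) that is not perfectly linear, so that R² falls strictly between 0 and 1. It also computes the equivalent form 1 − SSE / total variation, which gives the same value for a least-squares line with an intercept.

```python
# Hypothetical data, invented for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

n = len(xs)
x_mean = sum(xs) / n
y_mean = sum(ys) / n

# Closed-form least squares fit for one predictor.
slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sum(
    (x - x_mean) ** 2 for x in xs
)
intercept = y_mean - slope * x_mean
predictions = [intercept + slope * x for x in xs]

# Total variation: spread of the observed values around their mean.
total = sum((y - y_mean) ** 2 for y in ys)
# Explained variation: spread of the predictions around the same mean.
explained = sum((p - y_mean) ** 2 for p in predictions)
# Unexplained variation: the sum of squared errors.
unexplained = sum((y - p) ** 2 for y, p in zip(ys, predictions))

r2 = explained / total
r2_alt = 1 - unexplained / total

print("R^2 (explained / total):", round(r2, 4))
print("R^2 (1 - SSE / total):  ", round(r2_alt, 4))
```

Applied to the study-hours table, the same calculation would give R² = 1, because every point lies exactly on the fitted line.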

🤖 Linear Regression in Machine Learning

Linear regression is one of the most widely used algorithms in machine learning. Typical applications include:

- House price prediction

- Stock price forecasting

- Demand prediction

- Sales forecasting

- Risk prediction

⚠️ Assumptions of Linear Regression

- Linear relationship between variables

- Independence of errors

- Constant variance of errors (homoscedasticity)

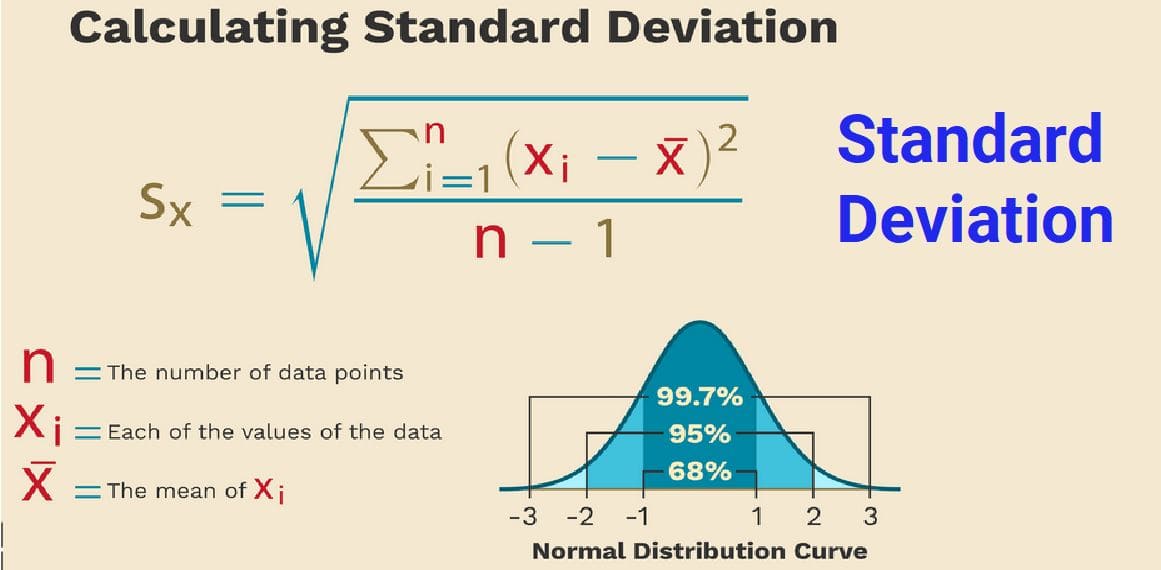

- Errors are normally distributed
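A common first step in checking these assumptions is to look at the residuals (observed minus predicted values) next to the fitted values. The sketch below only prints them for a small made-up dataset. It is a starting point for inspection, not a formal statistical test, and the data and variable names are assumptions for illustration.

```python
# Hypothetical data, invented for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

n = len(xs)
x_mean = sum(xs) / n
y_mean = sum(ys) / n

# Closed-form least squares fit for one predictor.
slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sum(
    (x - x_mean) ** 2 for x in xs
)
intercept = y_mean - slope * x_mean

fitted = [intercept + slope * x for x in xs]
residuals = [y - f for y, f in zip(ys, fitted)]

# Residuals listed against fitted values: a curve in this listing hints at
# non-linearity, and a spread that grows or shrinks hints at non-constant variance.
for f, r in zip(fitted, residuals):
    print(f"fitted = {f:.2f}   residual = {r:+.2f}")

# With an intercept in the model, least squares residuals sum to (numerically) zero.
print("sum of residuals:", round(sum(residuals), 10))
```

With more data, the same fitted-versus-residual pairs are usually drawn as a scatter plot, where such patterns are easier to see.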

🧠 Key Insights

- Linear regression models relationships between variables.

- The regression line predicts outcomes.

- The slope measures how much the dependent variable changes for a one-unit increase in the independent variable.

- The least squares method finds the best-fitting line.

- R² measures how well the model explains the data.

- Linear regression is the foundation of many ML models.