📘 Errors in Hypothesis Testing

A hypothesis test makes a decision from sample data, so that decision can be wrong. Understanding these errors is essential for judging how reliable a conclusion is.

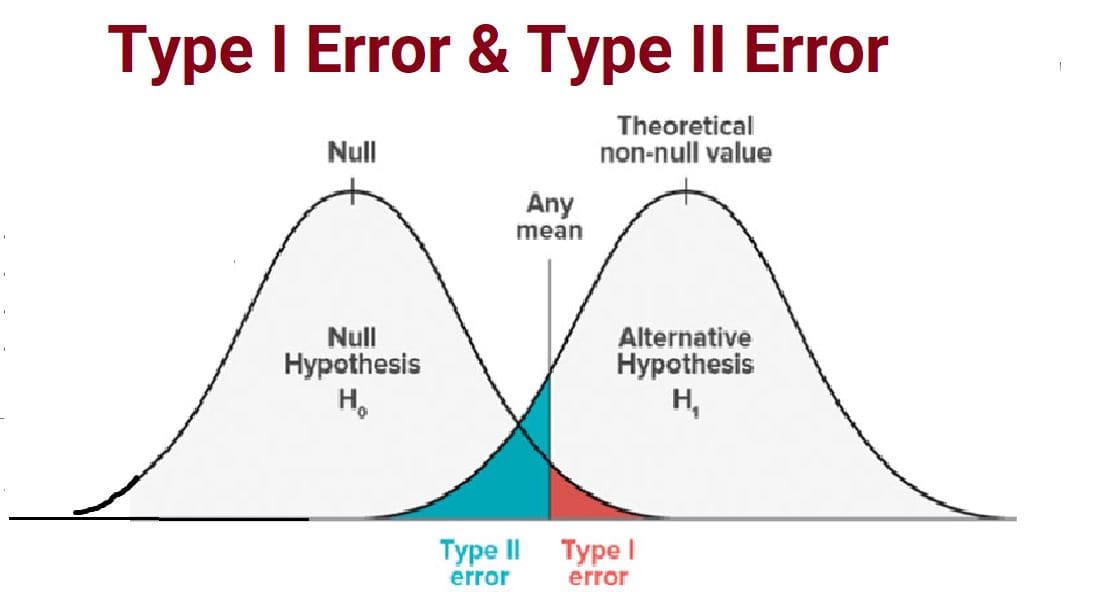

⚖️ The Two Possible Errors

There are two kinds of errors that can occur when making decisions about hypotheses:

- Type I Error

- Type II Error

🟥 Type I Error (False Positive)

A Type I error occurs when we reject the null hypothesis even though it is actually true.

🔎 Meaning

We conclude that an effect exists when in reality there is no effect.

📌 Example — Medical Test

- H₀: Patient does not have disease

- Test result says patient has disease

- But in reality, patient is healthy

This is a false alarm.

📌 Example — Court Trial Analogy

- H₀: Defendant is innocent

- Court declares defendant guilty

- But defendant is actually innocent

This is a wrongful conviction.

🟦 Type II Error (False Negative)

A Type II error occurs when we fail to reject the null hypothesis even though it is false.

🔎 Meaning

We conclude there is no effect when in reality an effect exists.

📌 Example — Medical Test

- H₀: Patient does not have disease

- Test result says patient is healthy

- But patient actually has disease

This is a missed diagnosis.

📌 Example — Court Trial Analogy

- H₀: Defendant is innocent

- Court declares defendant innocent

- But defendant is actually guilty

This is a guilty person set free.

📊 Summary Table

| Reality | Decision | Result | Error Type |
|---|---|---|---|
| H₀ True | Reject H₀ | Incorrect | Type I Error |
| H₀ False | Fail to Reject H₀ | Incorrect | Type II Error |
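The decision logic behind the table can be written as a small lookup. This is a minimal sketch: `classify_outcome` is a hypothetical helper used only for illustration. It also covers the two combinations the table leaves out, which are correct decisions.

```python
def classify_outcome(h0_true: bool, reject_h0: bool) -> str:
    """Map (reality, decision) to an outcome of a hypothesis test (hypothetical helper)."""
    if h0_true and reject_h0:
        return "Type I error"    # false positive: a true H0 was rejected
    if not h0_true and not reject_h0:
        return "Type II error"   # false negative: a false H0 was kept
    return "Correct decision"    # kept a true H0, or rejected a false one


# Walk through all four combinations of reality and decision.
for h0_true in (True, False):
    for reject_h0 in (True, False):
        print("H0 true:", h0_true, "| reject H0:", reject_h0, "->",
              classify_outcome(h0_true, reject_h0))
```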

🎯 Probability of Errors

Type I Error Probability (α)

The probability of making a Type I error is called the significance level.

α (alpha) = P(Reject H₀ | H₀ is true)

- Common values: 0.05, 0.01

- Chosen before testing
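One way to see that α really is a long-run error rate is to simulate many studies in which H₀ is true and count how often the test rejects it. This is a minimal sketch assuming a two-sided one-sample z-test with known σ = 1, H₀: μ = 0, and normally distributed data. The function names and settings are illustrative choices, not part of the original notes.

```python
import random
from statistics import NormalDist, fmean


def z_test_rejects(sample, mu0=0.0, sigma=1.0, alpha=0.05):
    """Two-sided one-sample z-test with known sigma; True if H0: mu = mu0 is rejected."""
    n = len(sample)
    z = (fmean(sample) - mu0) / (sigma / n ** 0.5)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    return abs(z) > z_crit


def estimate_type1_rate(alpha=0.05, n=30, trials=10_000, seed=0):
    """Simulate studies where H0 is TRUE (mean really is 0) and return the rejection rate."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]  # H0 holds
        rejections += z_test_rejects(sample, alpha=alpha)  # True counts as 1
    return rejections / trials


print(estimate_type1_rate(alpha=0.05))  # should land close to 0.05
print(estimate_type1_rate(alpha=0.01))  # should land close to 0.01
```

Every rejection in this simulation is a Type I error, because H₀ is true by construction. The observed rate therefore estimates α.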

Type II Error Probability (β)

β (beta) = P(Fail to reject H₀ | H₀ is false)

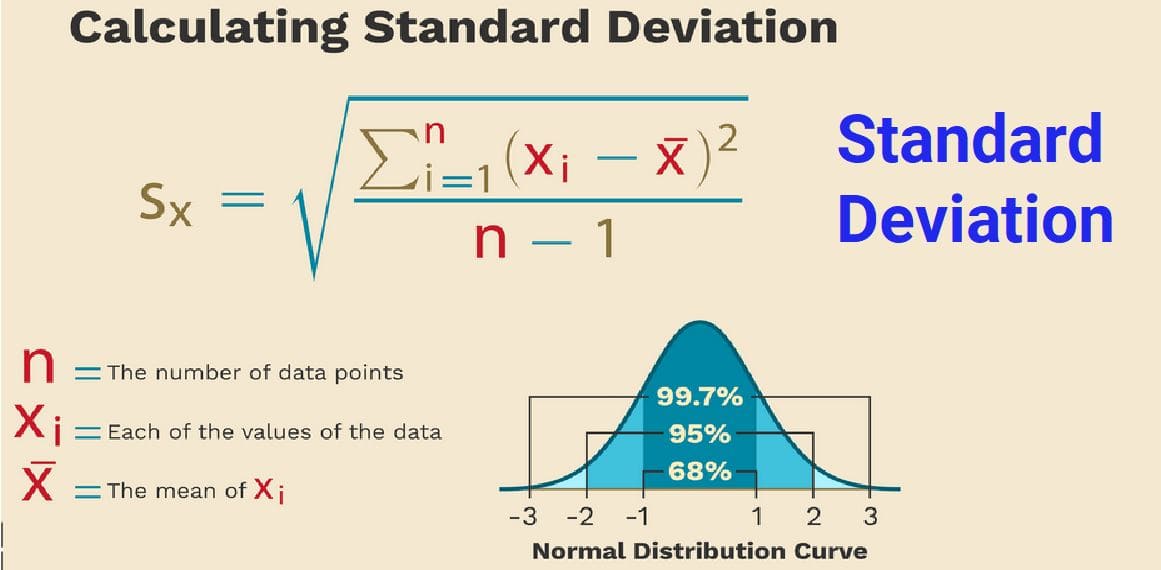

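- Depends on the true effect size, the sample size, the variability of the data, and α

- Harder to calculate directly, because it requires assuming a specific true value of the parameter under the alternative hypothesis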
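Once a specific alternative is assumed, β has a closed form for simple tests. This is a minimal sketch for a two-sided one-sample z-test with known σ, where the true mean is assumed to differ from the H₀ value by `effect`. The function name and the example numbers are hypothetical.

```python
from statistics import NormalDist


def type2_error_prob(effect, sigma, n, alpha=0.05):
    """Beta for a two-sided z-test of H0: mu = mu0 when the true mean is mu0 + effect."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect * n ** 0.5 / sigma  # centre of the z statistic under the alternative
    # We fail to reject H0 whenever the statistic falls inside [-z_crit, z_crit].
    return std_normal.cdf(z_crit - shift) - std_normal.cdf(-z_crit - shift)


# Same assumed effect, growing sample size: beta shrinks as n grows.
for n in (10, 30, 100):
    print(n, round(type2_error_prob(effect=0.3, sigma=1.0, n=n), 3))
```

Setting `effect=0` gives β = 1 − α. This is a quick sanity check: with no real effect, "failing to reject" simply means correctly keeping H₀.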

⚡ Power of a Test

The probability of correctly rejecting a false null hypothesis is called the power of the test.

Power = 1 − β

🔎 Example

- β = 0.20 → Power = 1 − 0.20 = 0.80

- If the effect is real (and of the assumed size), the test detects it 80% of the time
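Power is often used to choose a sample size before collecting data. This is a minimal sketch assuming a two-sided one-sample z-test with known σ and α = 0.05. It finds the smallest n that reaches 80% power for an assumed effect, then checks by simulation that the test does reject in roughly that fraction of studies. The names `power`, `smallest_n_for_power`, and `simulated_power` are hypothetical.

```python
import random
from statistics import NormalDist, fmean

ALPHA = 0.05
Z_CRIT = NormalDist().inv_cdf(1 - ALPHA / 2)


def power(effect, sigma, n):
    """Analytic power (1 - beta) of a two-sided one-sample z-test with known sigma."""
    shift = effect * n ** 0.5 / sigma
    beta = NormalDist().cdf(Z_CRIT - shift) - NormalDist().cdf(-Z_CRIT - shift)
    return 1 - beta


def smallest_n_for_power(effect, sigma, target=0.80):
    """Smallest sample size whose power reaches the target (simple linear search)."""
    n = 2
    while power(effect, sigma, n) < target:
        n += 1
    return n


def simulated_power(effect, sigma, n, trials=10_000, seed=1):
    """Fraction of simulated studies (H0: mu = 0 is false) in which H0 gets rejected."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        sample = [rng.gauss(effect, sigma) for _ in range(n)]
        z = fmean(sample) / (sigma / n ** 0.5)
        hits += abs(z) > Z_CRIT
    return hits / trials


n = smallest_n_for_power(effect=0.5, sigma=1.0)
print(n, round(power(0.5, 1.0, n), 3), simulated_power(0.5, 1.0, n))
```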

⚖️ Trade-Off Between Errors

For a fixed sample size, reducing one type of error generally increases the other.

| Change to α | Effect on H₀ | Effect on errors |
|---|---|---|
| Make α smaller (e.g., 0.05 → 0.01) | Harder to reject H₀ | Type I ↓ but Type II ↑ |
| Make α larger (e.g., 0.05 → 0.10) | Easier to reject H₀ | Type I ↑ but Type II ↓ |
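The trade-off can be made concrete by holding the data-generating scenario fixed and varying only α. This is a minimal sketch assuming a two-sided z-test with known σ, an assumed true effect of 0.4, and n = 25. All of these are illustrative settings, and `beta_for_alpha` is a hypothetical name.

```python
from statistics import NormalDist


def beta_for_alpha(alpha, effect=0.4, sigma=1.0, n=25):
    """Type II error probability of a two-sided z-test at the given alpha."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect * n ** 0.5 / sigma
    return std_normal.cdf(z_crit - shift) - std_normal.cdf(-z_crit - shift)


# Same scenario, stricter and stricter significance levels.
for alpha in (0.10, 0.05, 0.01, 0.001):
    b = beta_for_alpha(alpha)
    print(f"alpha={alpha:<6} beta={b:.3f} power={1 - b:.3f}")
```

Raising `n` in this sketch lowers β without changing α. A larger sample is the usual way to reduce both errors at once.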

🚨 Real-World Importance

Medical Research (H₀: the drug has no effect)

- Type I: Approving a drug that does not actually work

- Type II: Rejecting a drug that does work, possibly a life-saving one

Manufacturing (H₀: the product meets quality standards)

- Type I: Rejecting good products

- Type II: Accepting defective products

Machine Learning (H₀: the new model performs no better than the current one)

- Type I: Claiming model improved when it didn’t

- Type II: Missing genuine improvement

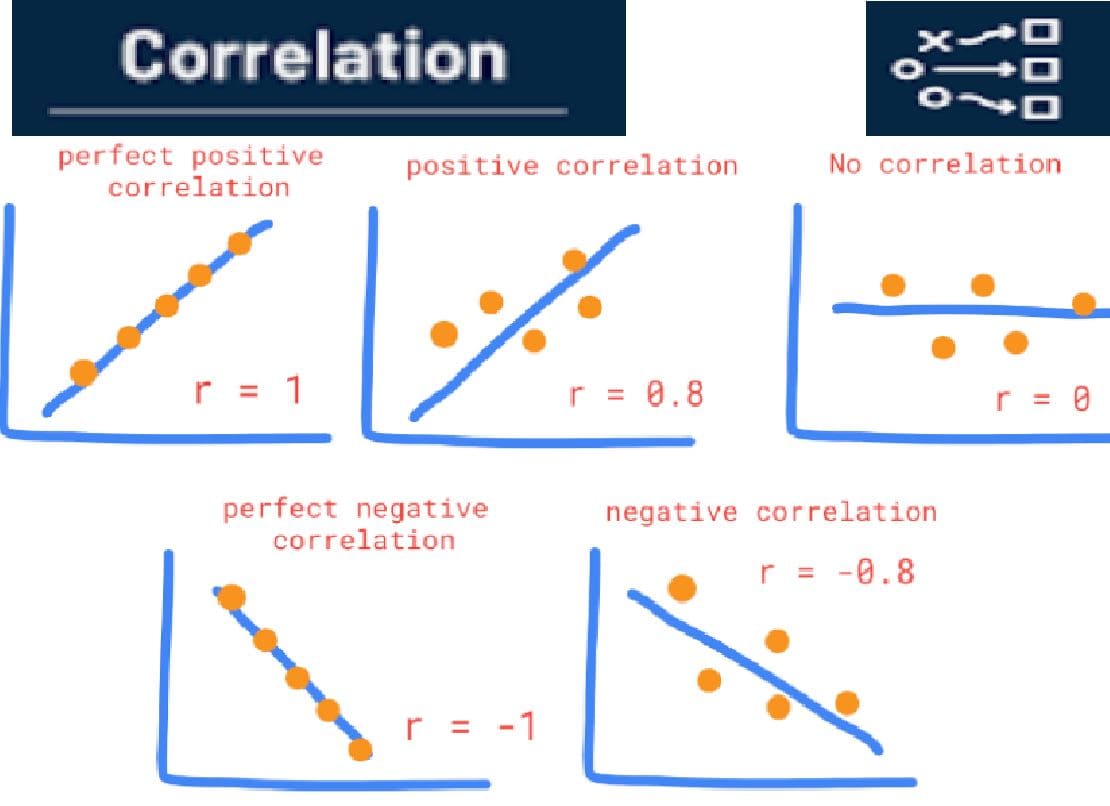

🤖 ML Connection — False Positives & False Negatives

If a "positive" prediction is treated like rejecting H₀ (an effect, disease, or event is flagged), hypothesis testing errors map directly onto ML classification errors:

| Hypothesis Testing | Machine Learning |
|---|---|
| Type I Error | False Positive |
| Type II Error | False Negative |
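In a classifier, these errors are counted from labels and predictions. This is a minimal sketch assuming binary labels where 1 means "positive". `confusion_counts` is a hypothetical helper, and the label lists are made-up illustrative data. The false positive rate plays the role of α and the false negative rate plays the role of β.

```python
def confusion_counts(y_true, y_pred):
    """Count TP/FP/TN/FN for binary 0/1 labels (1 = positive, like rejecting H0)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            counts["TP"] += 1
        elif predicted == 1 and actual == 0:
            counts["FP"] += 1  # analogue of a Type I error: false alarm
        elif predicted == 0 and actual == 0:
            counts["TN"] += 1
        else:
            counts["FN"] += 1  # analogue of a Type II error: missed detection
    return counts


y_true = [0, 0, 1, 1, 0, 1, 0, 1]  # hypothetical ground truth
y_pred = [0, 1, 1, 0, 0, 1, 0, 0]  # hypothetical model output
c = confusion_counts(y_true, y_pred)
fpr = c["FP"] / (c["FP"] + c["TN"])  # false positive rate, analogue of alpha
fnr = c["FN"] / (c["FN"] + c["TP"])  # false negative rate, analogue of beta
print(c, fpr, fnr)
```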

🧠 Key Insights

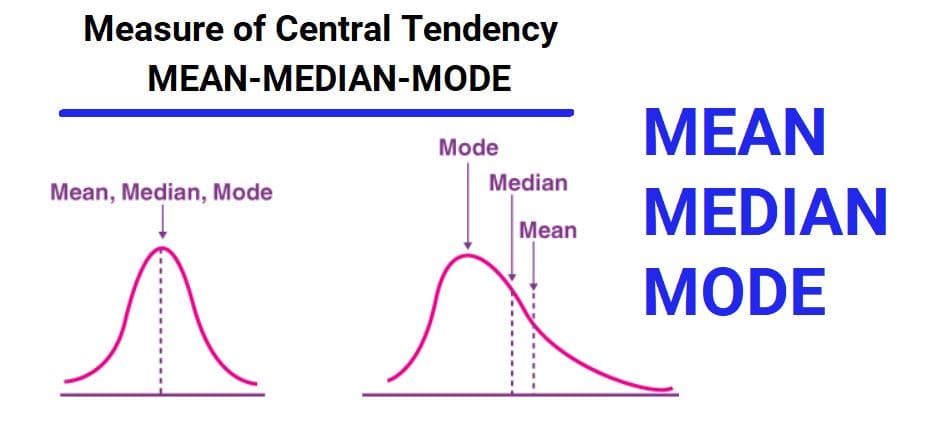

- Statistical decisions can be wrong due to sampling variability

- Type I error: False alarm

- Type II error: Missed detection

- α controls Type I error rate

- Power measures ability to detect real effects

- At a fixed sample size, lowering one error raises the other, so choose α and the sample size by weighing the cost of each error in context